Chain of Thought Prompting: Eliciting Reasoning in Large Language Models

Wei, J., et al. (2022). Chain-of-thought prompting elicits reasoning in large language models. NeurIPS.

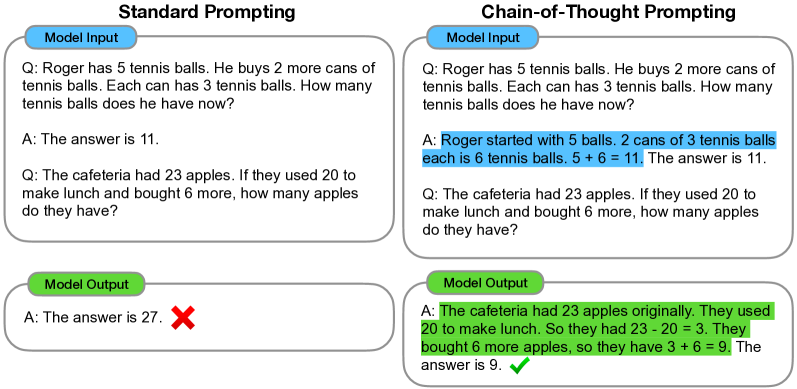

The 2022 "Chain of Thought" (CoT) paper changed how Large Language Models (LLMs) are prompted to solve complex problems. Before this work, standard few-shot prompting relied on direct input-output mapping, forcing the model to solve multi-step problems in a single leap from question to answer. Researchers at Google demonstrated that when the few-shot exemplars include worked intermediate reasoning steps, the model generates its own steps before answering, and its performance on arithmetic, symbolic, and commonsense reasoning tasks improves substantially. The result encouraged the field to view LLMs less as associative memory engines and more as systems that can carry out multi-step reasoning in text.

The Arithmetic Wall

The primary motivation for Chain of Thought was the consistent failure of even the largest models to solve grade-school-level math word problems. In standard prompting, a model is given a question and expected to produce the final answer immediately. For a multi-step problem, this requires the model to perform all internal logic within the fixed depth of its layers before generating the first output token. CoT relaxes this constraint by letting the model spend additional tokens, and therefore additional computation, on each logical step. By writing out the intermediate reasoning, the model effectively uses the generated sequence as an external working memory, so that each subsequent step can condition on the results of the previous ones.
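The difference between the two prompting styles comes down to what the exemplar's answer contains. The following is a minimal sketch of that contrast, not code from the paper: the exemplar text is the well-known tennis-ball example from Wei et al. (2022), while the function name `build_prompt` and the `Q:`/`A:` layout are assumptions made for illustration.

```python
# Exemplar taken from the tennis-ball example in Wei et al. (2022).
EXEMPLAR_QUESTION = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
EXEMPLAR_REASONING = (
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
    "6 tennis balls. 5 + 6 = 11."
)
EXEMPLAR_ANSWER = "The answer is 11."


def build_prompt(question: str, use_cot: bool) -> str:
    """Return a one-shot prompt (hypothetical helper).

    With use_cot=True the demonstration answer includes the worked
    reasoning; with use_cot=False it contains only the final answer.
    """
    if use_cot:
        demo_answer = f"{EXEMPLAR_REASONING} {EXEMPLAR_ANSWER}"
    else:
        demo_answer = EXEMPLAR_ANSWER
    return (
        f"Q: {EXEMPLAR_QUESTION}\n"
        f"A: {demo_answer}\n\n"
        f"Q: {question}\n"
        f"A:"
    )


if __name__ == "__main__":
    new_question = (
        "The cafeteria had 23 apples. If they used 20 to make lunch "
        "and bought 6 more, how many apples do they have?"
    )
    print(build_prompt(new_question, use_cot=True))
```

The only change between the two prompts is the exemplar's answer text; the model is left to imitate whichever format it is shown.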

The 100B Emergence Threshold

A central finding of the paper was that chain-of-thought reasoning is an "emergent" ability of model scale: it does not help smaller models and only yields gains once models reach a size of roughly 100 billion parameters. In models like PaLM (540B), CoT leads to a dramatic performance leap; on the GSM8K benchmark, accuracy jumped from 17.9% with standard prompting to 56.9% with CoT. Conversely, in models smaller than roughly 10B parameters, CoT often degrades performance, because the model generates fluent but illogical chains of thought: reasoning traces that sound plausible but contain errors that mislead the final prediction.

Zero-Shot CoT: "Let's Think Step by Step"

While the original paper focused on few-shot prompting, subsequent research (Kojima et al., 2022) showed that CoT reasoning can be triggered without any examples at all. Simply appending the phrase "Let's think step by step" to a prompt produces a similar jump in reasoning quality. For PaLM 540B, this "zero-shot" nudge increased accuracy on the GSM8K math benchmark from 12.5% to over 40%. This suggests that much of the capacity for step-by-step reasoning is already present in the pre-trained weights of large models, but it tends to stay unused until the prompt steers the model toward writing out its reasoning.
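Zero-shot CoT is commonly run as two model calls: one to elicit the reasoning and a second to extract a short final answer from it. The sketch below illustrates that flow under stated assumptions: `generate` is a hypothetical callable standing in for whatever text-completion API is in use, and the wording of the extraction cue is an assumption rather than a prescribed string.

```python
from typing import Callable

TRIGGER = "Let's think step by step."
# Answer-extraction cue; the exact wording here is an assumption.
EXTRACT_CUE = "Therefore, the answer is"


def zero_shot_cot(question: str, generate: Callable[[str], str]) -> tuple[str, str]:
    """Run a two-stage zero-shot CoT query (hypothetical helper).

    `generate` takes a prompt and returns the model's continuation.
    Returns the reasoning text and the extracted answer text.
    """
    # Stage 1: the trigger phrase opens the answer, so the model
    # continues with a reasoning chain instead of a bare answer.
    reasoning_prompt = f"Q: {question}\nA: {TRIGGER}"
    reasoning = generate(reasoning_prompt).strip()

    # Stage 2: feed the reasoning back and ask for the final answer only.
    answer_prompt = f"{reasoning_prompt} {reasoning}\n{EXTRACT_CUE}"
    answer = generate(answer_prompt).strip()
    return reasoning, answer
```

No exemplars appear anywhere in either prompt; the trigger phrase alone changes the shape of the model's output.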

Self-Consistency: Voting on Thought Traces

The reasoning chains generated by CoT are not always perfect; a model might make a calculation error in one path but find the correct logic in another. This led to the development of Self-Consistency (Wang et al., 2022), an extension where multiple, diverse chain-of-thought traces are sampled from the model for the same problem. Taking a majority vote over the final answers across these traces boosts performance even further. In PaLM 540B, adding self-consistency to CoT pushed GSM8K accuracy from 56.5% (the CoT baseline reported in that paper) to 74.4%, suggesting that the diversity of potential reasoning paths can be used to "filter" out individual logical failures.
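The voting step itself is simple once the traces have been sampled. The following is a minimal sketch, assuming each trace ends with a sentence of the form "The answer is N." as in the few-shot exemplars; the function names and the regular expression are illustrative, and the sampling of traces from a model is left out.

```python
import re
from collections import Counter
from typing import Optional

# Assumes traces state their result as "The answer is <number>".
ANSWER_PATTERN = re.compile(r"The answer is\s*(-?\d+(?:\.\d+)?)")


def extract_answer(trace: str) -> Optional[str]:
    """Return the last stated answer in a trace, or None if absent."""
    matches = ANSWER_PATTERN.findall(trace)
    return matches[-1] if matches else None


def self_consistent_answer(traces: list[str]) -> Optional[str]:
    """Majority vote over the final answers of sampled traces.

    Traces without a parsable answer are ignored. Ties are resolved
    in favor of the answer that appeared first.
    """
    answers = [a for a in map(extract_answer, traces) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    sampled = [
        "5 + 6 = 11. The answer is 11.",
        "5 + 2 = 7. The answer is 7.",
        "2 * 3 = 6 and 5 + 6 = 11. The answer is 11.",
    ]
    print(self_consistent_answer(sampled))
```

Only the final answers are compared, so two traces that reach the same number by different routes count as agreeing.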

Decomposition and Interpretability

Beyond raw performance, Chain of Thought offers a window into the "black box" of neural networks. Because the model externalizes its reasoning, researchers can inspect where a chain broke down, whether through a failure in arithmetic, a misunderstanding of the prompt, or a hallucinated fact. This transparency is valuable for aligning models with human logic, as it allows developers to debug the model's stated "thought process" rather than just its final output. It helped establish natural language as a shared interface for both giving instructions and checking the machine's path to the solution.
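One concrete form of this debugging is checking the arithmetic a trace states. The sketch below is an illustration only, not a method from the paper: it assumes steps are written as simple integer equations such as "3 + 6 = 9", and the names `STEP_PATTERN` and `find_arithmetic_errors` are hypothetical.

```python
import re

# Matches simple integer steps such as "23 - 20 = 3" (an assumed format).
STEP_PATTERN = re.compile(r"(-?\d+)\s*([+\-*])\s*(-?\d+)\s*=\s*(-?\d+)")
OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
}


def find_arithmetic_errors(trace: str) -> list[str]:
    """Return a description of every stated step whose result is wrong."""
    errors = []
    for left, op, right, claimed in STEP_PATTERN.findall(trace):
        expected = OPS[op](int(left), int(right))
        if expected != int(claimed):
            errors.append(f"{left} {op} {right} = {claimed} (expected {expected})")
    return errors


if __name__ == "__main__":
    trace = "They used 20, so 23 - 20 = 3. Then 3 + 6 = 8. The answer is 8."
    for problem in find_arithmetic_errors(trace):
        print(problem)
```

A check like this catches only arithmetic slips; misread prompts and invented facts still require reading the trace.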

Dive Deeper

Chain-of-Thought Prompting Elicits Reasoning in LLMs (Original Paper)

arXiv • article

Google Research: Language Models Perform Reasoning via Chain of Thought

Google Research • article

Zero-shot CoT: Let's Think Step by Step

arXiv • article

Self-Consistency Improves CoT Reasoning

arXiv • article

The author of this article utilized generative AI (Google Gemini 3.1 Pro) to assist in part of the drafting and editing process.