Join the EulerFold community

Track progress and collaborate on roadmaps with students worldwide.

DQN: Playing Atari with Deep RL

Mnih, V., Kavukcuoglu, K., Silver, D., Graves, A., Antonoglou, I., Wierstra, D., & Riedmiller, M. (2013). Playing atari with deep reinforcement learning. arXiv preprint arXiv:1312.5602.

Read Original Paper

The 2013 Deep Q-Network (DQN) paper from DeepMind demonstrated that a single AI agent could learn to play a variety of Atari 2600 games directly from raw pixels. Before this, reinforcement learning often required manual feature engineering to represent the state of the environment. The researchers proposed a method that combined Q-learning with deep neural networks, allowing the agent to discover its own features. It was a proof of concept that high-dimensional sensory input could be mapped directly to successful actions.

Experience Replay and Data Decorrelation

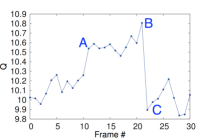

Visualization of the predicted value function for a segment of the game Seaquest.

A primary challenge in combining deep learning with reinforcement learning is that the data is highly correlated and non-stationary. As an agent moves through an environment, its successive states are mathematically similar, which violates the independent and identically distributed (i.i.d.) assumption required for most neural network training. To solve this, DQN introduced "experience replay," where the agent's experiences - tuples of state, action, reward, and next state - are stored in a large buffer. During training, the network is updated using a random sample from this buffer rather than the immediate sequence of experience. This engineering choice effectively "breaks" the correlations in the data, allowing the model to learn from a more stable and diverse distribution of events.

Deep Q-Learning and Function Approximation

DQN demonstrated that high-dimensional sensory input can be mapped directly to successful actions by using a deep convolutional neural network as a function approximator for the Q-value function. The Q-value represents the expected future reward for taking a specific action in a specific state. Because the state space of an Atari game is essentially infinite, the network must learn to generalize from raw pixels to abstract concepts like "distance to a paddle" or "remaining lives." By optimizing the Bellman equation - a recursive identity that relates current value to future reward - the network iteratively refines its estimates of the optimal strategy. This finding proved that deep learning could be used to solve the fundamental "credit assignment problem" in complex, unpredictable environments.

Target Networks and Optimization Stability

To further stabilize the training process, the DQN architecture utilizes a separate "target network" to calculate the expected future rewards. In standard Q-learning, the network's targets are constantly shifting as the weights are updated, leading to oscillations or divergence in the learning process. By holding the target network's weights fixed for several thousand steps, DQN provides a stationary goal for the optimization algorithm. This separation of "acting" and "estimating" proved to be a critical technical innovation, allowing the agent to discover its own features from raw pixels without the risk of catastrophic forgetting. It demonstrated that stability in reinforcement learning is a function of how the target objective is scheduled.

The Sensory Limit and Temporal Abstraction

Despite its success, DQN revealed limitations in how agents perceive time and context. Because the network was typically trained on a stack of four recent frames, its "memory" was limited to a fraction of a second. This made it struggle with games that required long-term planning, hidden state tracking, or temporal abstraction. This highlighted a fundamental question in AI: is intelligence primarily about reacting to the present moment, or about building an internal model of the world that persists over time? This limitation suggested that the next leap in agentic AI would require more complex architectures - such as recurrent networks or transformers - that can handle the dependencies of long-horizon tasks.

Generalization Across Diverse Domains

The most profound abstraction in the DQN paper was the demonstration of an agent that could learn to play multiple, vastly different games using the same architecture and hyperparameters. The agent was not "told" the rules of Pong, Breakout, or Seaquest; it discovered them through trial and error. This finding effectively launched the field of Deep Reinforcement Learning, proving that a single, unified objective - the maximization of reward - is sufficient to drive the emergence of complex, domain-specific skills. It remains the foundational assumption of all modern autonomous systems, suggesting that the "intelligence" of a system is a property of its learning algorithm rather than its pre-programmed knowledge.

Dive Deeper

DeepMind DQN Blog

DeepMind • article

Explore ResourceDQN Paper Explained

Towards Data Science • article

Explore Resource

Discussion

0Join the discussion

Sign in to share your thoughts and technical insights.

Loading insights...

Recommended Readings

The author of this article utilized generative AI (Google Gemini 3.1 Pro) to assist in part of the drafting and editing process.