Join the EulerFold community

Track progress and collaborate on roadmaps with students worldwide.

ResNet: Deep Residual Learning and the Breakthrough of Identity Mapping

He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. CVPR.

Read Original Paper

The 2015 introduction of the Deep Residual Network, or ResNet, resolved one of the most persistent obstacles in deep learning: the degradation problem. Before ResNet, adding more layers to a neural network often led to a paradoxical increase in training error, even when the additional layers should have theoretically been able to learn a simple identity mapping. By introducing the "skip connection," ResNet allowed models to scale to hundreds or even thousands of layers, fundamentally shifting the focus of architecture design from raw capacity to the fluidity of gradient flow.

The Degradation Problem vs. Vanishing Gradients

While the "vanishing gradient" problem is often cited as the limit of deep networks, ResNet addressed a different, more subtle phenomenon known as degradation. In a degrading network, accuracy begins to saturate and then drop rapidly as depth increases, even though the model has more parameters to work with. This is not the result of overfitting, as the training error itself rises. The researchers at Microsoft realized that the optimization landscape of a deep network becomes exponentially more difficult to navigate as layers are added. Specifically, it is easier for a network to learn a "residual" deviation from an identity mapping than it is to learn the identity mapping itself from scratch through a stack of non-linear transformations.

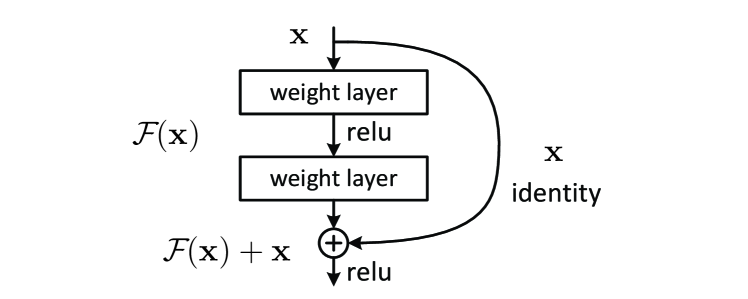

The Residual Mapping: H(x) = F(x) + x

The core mathematical innovation of ResNet is the reformulation of the learning objective. Instead of tasking a stack of layers with learning the desired underlying mapping , the researchers forced the layers to learn a residual function . The original mapping is then reconstructed by adding the input directly to the output of the layers: .

This adjustment ensures that the "default" behavior of a residual block is an identity mapping. If the weight layers are initialized to zero, the block simply passes the signal through unchanged. This simple addition () provides the optimization algorithm with a powerful structural prior, allowing it to focus only on the small, incremental changes required to improve the representation at each stage.

Identity Shortcuts: The Highway to Stability

The physical realization of this math is the "shortcut connection" (or skip connection). These connections bypass one or more layers, performing an element-wise addition of the input and the processed output. Because these shortcuts are "identity" connections, they add neither extra parameters nor computational complexity. During backpropagation, these shortcuts act as a "highway" for the gradient, allowing the signal from the loss function to reach the earliest layers of the network without being diluted by the non-linearities and weights of the intermediate blocks. This architectural transparency is what enabled ResNet to scale to 152 layers - nearly ten times deeper than the previous state-of-the-art models like VGG.

Building Blocks vs. Bottleneck Blocks

To scale the architecture to extreme depths without an explosion in computational cost, ResNet introduced two types of building blocks. For shallower networks (e.g., ResNet-18 or ResNet-34), a simple block consisting of two 3x3 convolutional layers is used. For deeper networks (e.g., ResNet-50, 101, and 152), a "bottleneck" design is employed.

The bottleneck block uses a stack of three layers: a 1x1 convolution to reduce the dimensionality of the input (creating the "bottleneck"), a 3x3 convolution to process the features, and a final 1x1 convolution to restore the original dimensionality before the skip connection addition. This design significantly reduces the number of parameters and the computational footprint of the 3x3 operation, allowing the network to allocate its "depth budget" more effectively.

ResNet-152: Deepening the Image Recognition Frontier

The impact of ResNet was immediate and comprehensive. At the 2015 ILSVRC and MS COCO competitions, ResNet-152 swept all categories, achieving a top-5 error rate of 3.57% - surpassing human performance for the first time on the ImageNet dataset. Beyond the specific scores, ResNet provided the blueprint for virtually every modern neural architecture, from the Transformers used in LLMs to the Diffusion models used in image generation. The discovery that "identity" is a powerful architectural primitive proved that in deep learning, the most effective way to learn complex patterns is to ensure that the simplest patterns are never lost.

Dive Deeper

Deep Residual Learning for Image Recognition (Original Paper)

arXiv • article

Explore ResourcePyTorch ResNet Implementation Guide

PyTorch • docs

Explore ResourceResNet Explained (Dive into Deep Learning)

D2L • docs

Explore ResourceCVPR 2016: ResNet Presentation by Kaiming He

YouTube • video

Explore Resource

Discussion

0Join the discussion

Sign in to share your thoughts and technical insights.

Loading insights...

Recommended Readings

The author of this article utilized generative AI (Google Gemini 3.1 Pro) to assist in part of the drafting and editing process.