Join the EulerFold community

Track progress and collaborate on roadmaps with students worldwide.

Stable Diffusion: Latent Space

Rombach, R., Blattmann, A., Lorenz, D., Esser, P., & Ommer, B. (2022). High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 10684-10695).

Read Original Paper

In 2021, the release of Latent Diffusion Models, later known as Stable Diffusion, solved the problem of computational cost in generative AI. While earlier diffusion models worked directly on image pixels, they were incredibly slow and resource-heavy. Researchers at LMU Munich and Runway proposed that generation should instead happen in a 'latent space' - a compressed mathematical representation of an image. It was a push to make high-quality generation accessible on consumer hardware by separating the act of creation from the act of rendering.

Diffusion in Latent Space

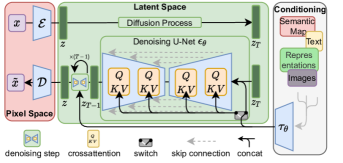

Latent Diffusion architecture showing the interaction between the autoencoder, the U-Net, and the conditioning mechanism.

Stable Diffusion resolved the computational bottleneck of high-resolution image synthesis by shifting the diffusion process from pixel space to a compressed, lower-dimensional latent space. Through the use of a pre-trained Variational Autoencoder (VAE), the model first compresses raw images into a latent representation that captures their semantic essence while discarding imperceptible details.

The subsequent denoising process occurs entirely within this latent space, guided by a cross-attention mechanism that allows the U-Net to "attend" to external conditioning signals like text embeddings. This separation of semantic creation from high-resolution rendering proved that high-fidelity synthesis is achievable on consumer-grade hardware by optimizing the mathematical manifold on which the model operates.

Classifier-Free Guidance (CFG)

A critical technical component of Stable Diffusion is Classifier-Free Guidance (CFG), the mechanism that allows users to control how strictly the model follows a prompt. During training, the model is occasionally presented with an "empty" prompt, allowing it to learn the unconditional distribution of images.

At inference time, the model calculates two trajectories: one based on the prompt and one unconditional. By extrapolating the difference between these two paths - weighted by a "CFG Scale" - the model can significantly amplify the features requested in the prompt. This finding proved that the prompt acts as a "vector" in latent space, and by increasing the CFG scale, we can steer the model toward more precise, albeit sometimes less diverse, interpretations of the text.

Universal Conditioning

The reasoning behind Stable Diffusion was the need for a more flexible way to guide the generative process. They introduced a 'cross-attention' mechanism that allows the model to be conditioned on various inputs, such as text, depth maps, or other images. This turned the diffusion model into a universal generative engine that can follow complex instructions. It revealed that generation is most powerful when it can be steered by human concepts, rather than just random noise.

ControlNet and Spatial Steering

The versatility of the latent space was further expanded by the introduction of ControlNet in 2023. ControlNet adds an auxiliary network that can "lock" the spatial structure of the generation based on specific inputs like Canny edges, depth maps, or human poses.

By freezing the weights of the main U-Net and training a trainable copy of the encoding layers, ControlNet allows for pixel-perfect control over the composition of an image. This engineering shift transformed Stable Diffusion from a simple "prompt-to-image" tool into a professional design engine, proving that generative models can be integrated into existing creative workflows that require rigorous spatial constraints.

Video Generation and Latent Consistency

The evolution of latent diffusion has moved toward the temporal and real-time domains. Models like Stable Video Diffusion (SVD) extend the 2D latent space into a 3D volume by adding temporal attention layers, allowing for the generation of coherent video frames.

Simultaneously, Latent Consistency Models (LCM) and SDXL-Turbo have reduced the number of denoising steps from 50 down to 1 or 4. By distilling the multi-step diffusion process into a single-step "consistency mapping," these models achieve near-instantaneous image generation. This revealed that the computational "tax" of diffusion can be almost entirely eliminated through clever distillation, paving the way for real-time interactive AI art and high-fidelity video synthesis.

The Efficiency Shift

The success of Stable Diffusion marked a shift in the AI industry toward efficiency and democratization. By proving that high-performance models could run on standard GPUs, the researchers catalyzed a wave of open-source innovation. This revealed that the most impactful breakthroughs are often the ones that reduce the barriers to entry. It raises the question of whether the future of AI will be defined by the size of the models or by the cleverness of the compression that makes them usable by everyone, moving the industry toward a future where creative power is accessible to all.

Dive Deeper

Stable Diffusion Blog

Stability AI • article

Explore ResourceLDM Paper on GitHub

GitHub • code

Explore Resource

Discussion

0Join the discussion

Sign in to share your thoughts and technical insights.

Loading insights...

Recommended Readings

The author of this article utilized generative AI (Google Gemini 3.1 Pro) to assist in part of the drafting and editing process.