Join the EulerFold community

Track progress and collaborate on roadmaps with students worldwide.

AlexNet: The Big Bang of Deep Learning and the ImageNet Breakthrough

Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). ImageNet classification with deep convolutional neural networks. Advances in neural information processing systems, 25.

Read Original Paper

The 2012 ImageNet competition (ILSVRC) is widely regarded as the "Big Bang" of modern artificial intelligence. AlexNet, a deep convolutional neural network (CNN) developed by Alex Krizhevsky and his colleagues, won the competition by a massive margin, achieving a top-5 error rate of 15.3% - nearly 10 percentage points lower than the runner-up. This victory proved that neural networks, long dismissed as computationally impractical, were the superior path for high-dimensional pattern recognition. AlexNet provided the technical blueprint for the current era of deep learning, combining GPU-accelerated training, non-linear activations, and robust regularization techniques.

The Shift to ReLU: Speeding up Convergence

Before AlexNet, the standard activation function for neural networks was the logistic sigmoid or the hyperbolic tangent (). While these functions are biologically inspired, they suffer from the "vanishing gradient" problem: as the input becomes large, the gradient approaches zero, slowing down training to a crawl. AlexNet popularized the use of the Rectified Linear Unit (ReLU), defined as . The authors demonstrated that deep CNNs with ReLUs reached a 25% training error rate six times faster than an equivalent network using . This shift from saturating to non-saturating non-linearities was a fundamental prerequisite for training the deep architectures that follow today.

GPU Parallelization: The Twin-GTX Architecture

One of the primary bottlenecks in 2012 was the limited memory of graphics cards. The original AlexNet was so large that it could not fit on a single GPU. To solve this, the researchers split the network across two NVIDIA GTX 580 GPUs (each with 3GB of RAM). The GPUs communicated only at certain layers, effectively creating a "half-split" architecture where each card processed a different set of feature maps. This manual orchestration of hardware revealed that the future of AI was not just a mathematical challenge, but a massive engineering problem of data movement and parallelized compute.

Overlapping Pooling and Local Response Normalization

To reduce the dimensionality of the representations while preserving spatial information, AlexNet utilized overlapping pooling. Traditional pooling windows were equal to the stride (e.g., window with a stride of 2). AlexNet used a window with a stride of 2, creating an overlapping effect that the authors found made the model slightly more difficult to overfit. Additionally, they introduced Local Response Normalization (LRN), a form of lateral inhibition inspired by biological neurons. While LRN has largely been replaced by LayerNorm and BatchNorm in modern systems, it represented an early, clinical attempt to stabilize the internal distributions of a deep network.

Fighting Overfitting: Dropout and Data Augmentation

A deep model with 60 million parameters is highly susceptible to overfitting, especially when trained on the 1.2 million images of the ImageNet dataset. AlexNet introduced two critical solutions. First, it popularized Dropout, a technique where neurons are randomly "turned off" during training (with a probability of 0.5), forcing the network to learn redundant, robust representations. Second, the researchers used intensive data augmentation, including random image translations, horizontal reflections, and a PCA-based color shift (Fancy PCA). These techniques effectively increased the size of the training set by several orders of magnitude, teaching the model that the identity of an object is invariant to its position or lighting.

The Legacy of Toronto and the CNN Renaissance

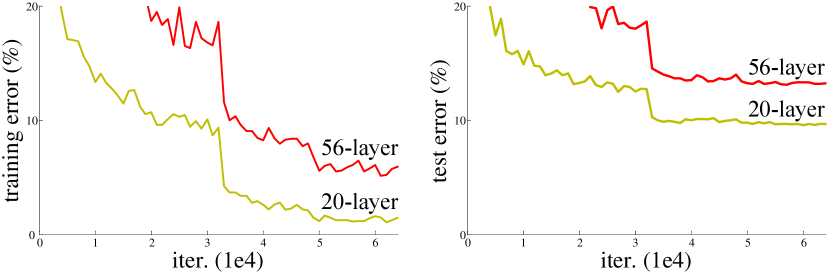

The impact of AlexNet transcended the ImageNet leaderboard. It validated the "Connectionist" view of AI and catalyzed the development of more efficient CNN architectures like VGG, GoogLeNet, and ResNet. The paper also marked the emergence of the University of Toronto as the epicenter of AI research, with co-authors Ilya Sutskever and Geoffrey Hinton going on to lead the foundational teams at OpenAI and Google. AlexNet proved that with enough data, enough compute, and the right architectural priors, machines could not just see, but understand the visual world.

Dive Deeper

ImageNet Classification with Deep CNNs (Original Paper)

NeurIPS • article

Explore ResourceDeep Learning: The ImageNet Moment

MIT Tech Review • article

Explore ResourceA Visual Guide to AlexNet Architecture

Medium • article

Explore ResourceAlexNet Research Paper Walkthrough

YouTube • video

Explore Resource

Discussion

0Join the discussion

Sign in to share your thoughts and technical insights.

Loading insights...

Recommended Readings

The author of this article utilized generative AI (Google Gemini 3.1 Pro) to assist in part of the drafting and editing process.