Join the EulerFold community

Track progress and collaborate on roadmaps with students worldwide.

YOLO: You Only Look Once and the Unified Detection Paradigm

arXiv:1506.02640 (2015)

Read Original Paper

Before the introduction of YOLO (You Only Look Once), object detection was a multi-stage pipeline. Systems like R-CNN used region proposal algorithms to identify potential objects, followed by individual classification and refinement steps. This complexity made real-time detection impossible. YOLO fundamentally reframed object detection as a single regression problem, mapping raw pixels directly to bounding box coordinates and class probabilities in a single forward pass. By "looking only once," the model achieved unprecedented speeds, enabling the transition of computer vision from static image analysis to real-time video understanding.

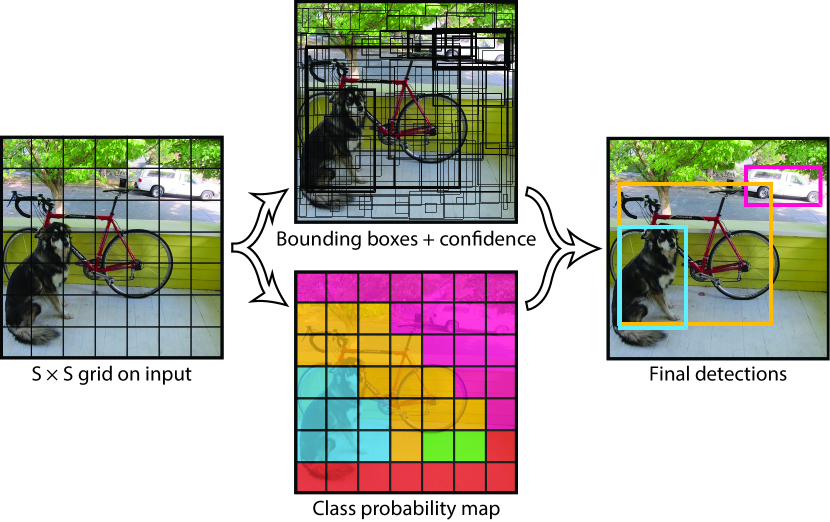

The SxS Grid and Global Context

The core innovation of YOLO is the spatial discretization of the image. The model divides the input image into an grid (typically ). If the center of an object falls into a grid cell, that cell is responsible for detecting that object. Each cell predicts a fixed number of bounding boxes (typically ) and the confidence scores for those boxes. Unlike sliding window or region-based methods, YOLO processes the entire image at once. This global receptive field allows the model to learn the contextual relationship between objects and their backgrounds, significantly reducing "false positive" detections in complex scenes where background patches are mistaken for objects.

The Anatomy of a Prediction: [x, y, w, h, C]

For each bounding box, YOLO predicts five primary values: the coordinates , the dimensions , and a confidence score . The coordinates represent the center of the box relative to the bounds of the grid cell, while are predicted relative to the entire image. The confidence score reflects how certain the model is that the box contains an object and how accurate it thinks the box is (calculated as ). Simultaneously, each cell predicts conditional class probabilities. During inference, these are multiplied by the box confidence scores to produce class-specific confidence scores for every box, allowing for efficient filtering of low-signal detections.

The Multi-Part Loss Function

Training a unified detection model requires a complex loss function that balances localization accuracy with classification precision. YOLO utilizes a sum-squared error loss that is divided into three functional components:

- Localization Loss: Penalizes errors in the predictions, with a higher weight () to prioritize spatial accuracy.

- Confidence Loss: Penalizes the model when it predicts high confidence for empty cells or low confidence for cells containing objects. To handle the fact that most cells in an image are empty, the loss for "no-object" cells is downweighted ().

- Classification Loss: Penalizes incorrect class predictions for cells that contain an object.

This composite loss ensures that the model learns to prioritize the "how many" and "where" of detection with equal clinical focus.

Real-Time Architecture: 24 Convolutional Layers

The YOLO architecture is inspired by GoogLeNet, consisting of 24 convolutional layers followed by two fully connected layers. The initial layers extract high-level features from the image, while the fully connected layers predict the final output tensor (a tensor for Pascal VOC). This streamlined design allows the "Fast YOLO" variant to process images at 155 frames per second (FPS), while the standard model maintains 45 FPS - well above the threshold required for real-time video processing on modern hardware.

The Localization Trade-off

While YOLO excels at speed and global context, its spatial constraints impose certain limitations. Because each grid cell can only predict a limited number of bounding boxes and only one class, the model struggles with "flocks" - groups of small objects that are close together, such as a swarm of bees or a crowd of people. Furthermore, because the loss function treats errors in small and large boxes equally, the model can exhibit localization inaccuracies compared to slower, multi-stage detectors. Despite these trade-offs, the shift toward unified detection proved that in computer vision, the efficiency of a single, integrated path is often superior to the complexity of a fragmented pipeline.

Dive Deeper

You Only Look Once: Unified, Real-Time Object Detection (Original Paper)

arXiv • article

Explore ResourceYOLO: Real-Time Object Detection (Project Page)

Darknet • docs

Explore ResourceA Brief History of YOLO

Machine Learning Mastery • article

Explore ResourceYOLOv1 Research Paper Walkthrough

YouTube • video

Explore Resource

Discussion

0Join the discussion

Sign in to share your thoughts and technical insights.

Loading insights...

Recommended Readings

The author of this article utilized generative AI (Google Gemini 3.1 Pro) to assist in part of the drafting and editing process.